AI and Structured Data: Optimizing Performance in Financial Markets

Posted Monday, May 25

Highlights from the May 15, 2026, AI and Structured Data Forum, featuring the Securities and Exchange Commission, the AICPA, Auditchain, Broadridge Financial, the Center for Research toward Advancing Financial Technologies (CRAFT), Crowe LLP, Deloitte & Touche LLP, PwC, the Global LEI Foundation, Novaworks LLC, Stevens Institute of Technology, UBS Asset Management, and XBRL US. Sponsored by Crowe LLP and Novaworks LLC.

Executive Summary

The integration of Artificial Intelligence (AI) and structured data represents a critical juncture for the financial, accounting, and regulatory sectors. As outlined in the proceedings of the AI and Structured Data Forum, the primary challenge is ensuring that AI is not only powerful but also trustworthy and reliable. The consensus among industry leaders from the SEC, major accounting firms (Deloitte, PwC, Crowe), and academia is that structured, standardized data (such as XBRL) is the essential foundation for accurate AI performance.

Key takeaways include:

- Efficiency and Accuracy: While AI infrastructure is currently heavily subsidized, the rising costs of processing (tokens) necessitate the use of structured data to allow models to operate more efficiently and consistently.

- Human in the Loop: In audit and assurance, AI serves as an accelerator for "grunt work," but human judgment and professional skepticism remain paramount. Accountability cannot be delegated to a machine.

- Data Quality Risks: The "garbage in, garbage out" principle remains the primary threat. Low-quality, unstructured data lead to AI hallucinations and erroneous financial reporting.

- Talent Evolution: The traditional apprenticeship model in accounting is being disrupted. Firms must now "manufacture" audit managers faster, shifting the focus from manual data entry to high-level critical analysis.

The Symbiotic Relationship: AI and Structured Data

The effectiveness of Large Language Models (LLMs) in finance is directly tied to the data they ingest. Machine learning functions optimally when sourcing data that adheres to a structured semantic data model.

Overcoming AI Limitations

AI is driven by mathematics and statistics, making it prone to hallucinations and probabilistic errors. To mitigate these risks, structured data provides:

- Contextual Metadata: Machine-readable data that includes set definitions and relationships (e.g., how "Cash" rolls up into "Total Assets").

- Machine Understandability: Moving beyond just "machine-readable" (PDF/HTML) to "machine-understandable" data that allows AI to grasp the accounting codification behind a tag.

- Reduced Costs: Standardized data reduces the computational friction of AI processing, which is increasingly vital as the price of tokens and data center infrastructure rises.
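To make the roll-up idea concrete, here is a minimal Python sketch of how machine-readable relationships let software check that reported totals foot. The concept names, weights, and amounts are illustrative assumptions, not taken from any real taxonomy or filing.

```python
from decimal import Decimal

# Hypothetical calculation relationships: parent concept -> [(child concept, weight)].
# These names are placeholders for illustration, not actual taxonomy elements.
ROLLUPS = {
    "Assets": [("AssetsCurrent", 1), ("AssetsNoncurrent", 1)],
    "AssetsCurrent": [("Cash", 1), ("AccountsReceivable", 1)],
}


def check_rollups(facts, rollups, tolerance=Decimal("0")):
    """Return (parent, reported, computed) for every roll-up that does not foot."""
    failures = []
    for parent, children in rollups.items():
        # Skip roll-ups that cannot be evaluated because a fact is missing.
        if parent not in facts or any(child not in facts for child, _ in children):
            continue
        computed = sum(
            (facts[child] * weight for child, weight in children), Decimal("0")
        )
        if abs(computed - facts[parent]) > tolerance:
            failures.append((parent, facts[parent], computed))
    return failures


# Made-up reported values; "Assets" is deliberately off by 10.
facts = {
    "Cash": Decimal("120"),
    "AccountsReceivable": Decimal("80"),
    "AssetsCurrent": Decimal("200"),
    "AssetsNoncurrent": Decimal("300"),
    "Assets": Decimal("510"),
}

failures = check_rollups(facts, ROLLUPS)
for parent, reported, computed in failures:
    print(f"{parent}: reported {reported}, children sum to {computed}")
```

Because the relationships travel with the data, a check like this needs no guessing about which line items belong together, which is the kind of context a model would otherwise have to infer from layout.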

Transformation of Audit and Assurance

AI is being deployed across the accounting profession as a "digital intern" or accelerator, particularly in the audit cycle.

Current Applications in Audit Firms

| Phase of Audit | AI/Machine Learning Application |
|---|---|
| Risk Assessment | Identifying anomalies and outliers in massive datasets much earlier in the process. |
| Execution | Matching millions of transactions, summarizing complex narratives from tables, and performing historical roll-forwards. |
| Control Testing | Evaluating the effectiveness of internal controls and identifying reconciling items in real-time. |
| Reporting | Drafting initial audit committee reports and financial statement footnotes based on quantitative data. |

The "Human in the Loop" (HITL) Requirement

Despite the speed of AI, firms maintain a strict policy of human accountability:

- Unassisted Self-Review: Practitioners are required to trace AI outputs back to the original source information themselves.

- Professional Skepticism: Auditors are "wired to be skeptical." They must treat AI outputs with the same "trust but verify" mindset applied to client-provided data.

- Accountability: Partners remain legally and ethically responsible for the audit opinion; the AI is merely a tool for acceleration, not a replacement for judgment.
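One way to picture the "trust but verify" step is a tie-out that lists every dollar amount in an AI-drafted narrative that cannot be found in the source data. The sketch below is a simplified illustration; the function names, the regular expression, and the sample figures are all assumptions, and it supports human review rather than replacing it.

```python
import re
from decimal import Decimal

# Matches amounts such as $1,250.00 or $300 (a simplifying assumption about format).
AMOUNT_PATTERN = re.compile(r"\$([0-9][0-9,]*(?:\.[0-9]+)?)")


def extract_amounts(text):
    """Pull dollar amounts out of a draft narrative as Decimal values."""
    return [Decimal(m.replace(",", "")) for m in AMOUNT_PATTERN.findall(text)]


def unsupported_amounts(draft, source_values):
    """Return amounts in the draft that do not appear in the source data."""
    source = set(source_values)
    return [amount for amount in extract_amounts(draft) if amount not in source]


# Hypothetical AI-drafted sentence and hypothetical source figures.
draft = "Revenue rose to $1,250.00 from $1,100.00, while cash was $300."
source_values = [Decimal("1250.00"), Decimal("300")]

flagged = unsupported_amounts(draft, source_values)
print(flagged)  # amounts a reviewer should trace manually
```

Anything the function flags still needs a person to decide whether the draft or the source is wrong; the code only narrows down where to look.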

Challenges, Risks, and the Future of Talent

The Knowledge and Training Gap

- The "Digital Intern" Risk: Younger practitioners may view AI as an "answer machine" and accept its output as "gospel" without sufficient skepticism.

- Micro-Learning: Firms are pivoting to required interactive training where partners and staff must use AI tools in simulated environments to understand their mechanics and limitations.

- Trust Issues: Paradoxically, some younger professionals are hesitant to use AI, fearing it might be viewed as "cheating" based on their academic backgrounds.

Impact on the Workforce

The traditional "triangle" apprenticeship model (many juniors doing manual work supporting a few partners) is shifting toward a "diamond" shape.

- Acceleration of Experience: AI automates "grunt work" (licking stamps, sending confirmations, manipulating spreadsheets).

- Focus on Analysis: The industry now needs to "manufacture audit managers" in three years rather than five, focusing on students who possess high-level critical thinking and technology skills from day one.

Regulatory Landscape and Data Quality

The Securities and Exchange Commission (SEC) and other bodies are actively managing the intersection of AI and financial disclosure.

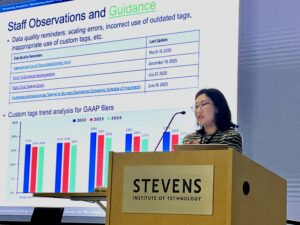

SEC Office of Structured Disclosure (OSD) Roles

The OSD focuses on making data accessible and easy to use through several initiatives:

- Taxonomy Management: Designing taxonomies and validation rules (e.g., preventing "fat finger" errors like a small company reporting a trillion dollars in public float).

- Free Datasets: Publishing 15 free datasets, including financial statement and notes data, to support academic research and investor analysis.

- AI Use Cases: Developing tools to summarize thousands of public comment letters and building a "semantic layer" for the Enterprise Data Platform (EDP).
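The "fat finger" example above can be sketched as a simple plausibility rule. This is not the SEC's actual validation logic; the class, the function, and the 100x threshold are hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PublicFloatFact:
    company: str
    value_usd: int
    prior_value_usd: Optional[int] = None


def validate_public_float(fact: PublicFloatFact, max_jump: int = 100) -> List[str]:
    """Return warnings for implausible public float values.

    max_jump is an assumed threshold: a value more than max_jump times the
    prior-year figure is treated as a likely scale or units error.
    """
    warnings = []
    if fact.value_usd < 0:
        warnings.append("public float is negative")
    prior = fact.prior_value_usd
    if prior is not None and prior > 0 and fact.value_usd > prior * max_jump:
        warnings.append(
            f"public float is more than {max_jump}x the prior-year value; "
            "check scale and units"
        )
    return warnings


# Made-up filers: one reports a trillion dollars after $40 million the year before.
small = PublicFloatFact("Example Small Co", 1_000_000_000_000, prior_value_usd=40_000_000)
steady = PublicFloatFact("Example Steady Co", 45_000_000, prior_value_usd=40_000_000)

small_warnings = validate_public_float(small)
steady_warnings = validate_public_float(steady)
print(small_warnings)
print(steady_warnings)
```

A rule of this kind only works because the value arrives as a tagged number with a known concept and period; the same check against free text would first require extracting and interpreting the figure.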

Legislative and Policy Trends

- Financial Data Transparency Act (FDTA): A bipartisan bill aimed at further structuring corporate and eventually municipal disclosures.

- Clarity Act: Legislation that enables disclosure taxonomies for digital assets to be put into operation.

- SEC Reform: While some market participants suggest reducing XBRL burdens to save costs, regulators emphasize that AI’s accuracy depends on the very structured data those mandates provide.

Emerging Frontiers: DeFi and Tokenization

The forum highlighted a rapid "arms race" in decentralized finance (DeFi) and the tokenization of Real-World Assets (RWA).

- Interoperability: Communication between blockchains is difficult without data standards. Standardized data reduces friction in decentralized applications.

- Tokenized Treasuries: Tokenized U.S. Treasuries have seen "hockey stick" growth, rising from a negligible amount to over $1.2 billion in a short period.

- Market Participation: Major players (e.g., BlackRock, Franklin Templeton) are entering the tokenization space, driving a need for consistent, machine-readable regulatory disclosures.
